Contextual AI Generative AI with Weaviate

v1.34.0

Weaviate's integration with Contextual AI's APIs allows you to access their models' capabilities directly from Weaviate.

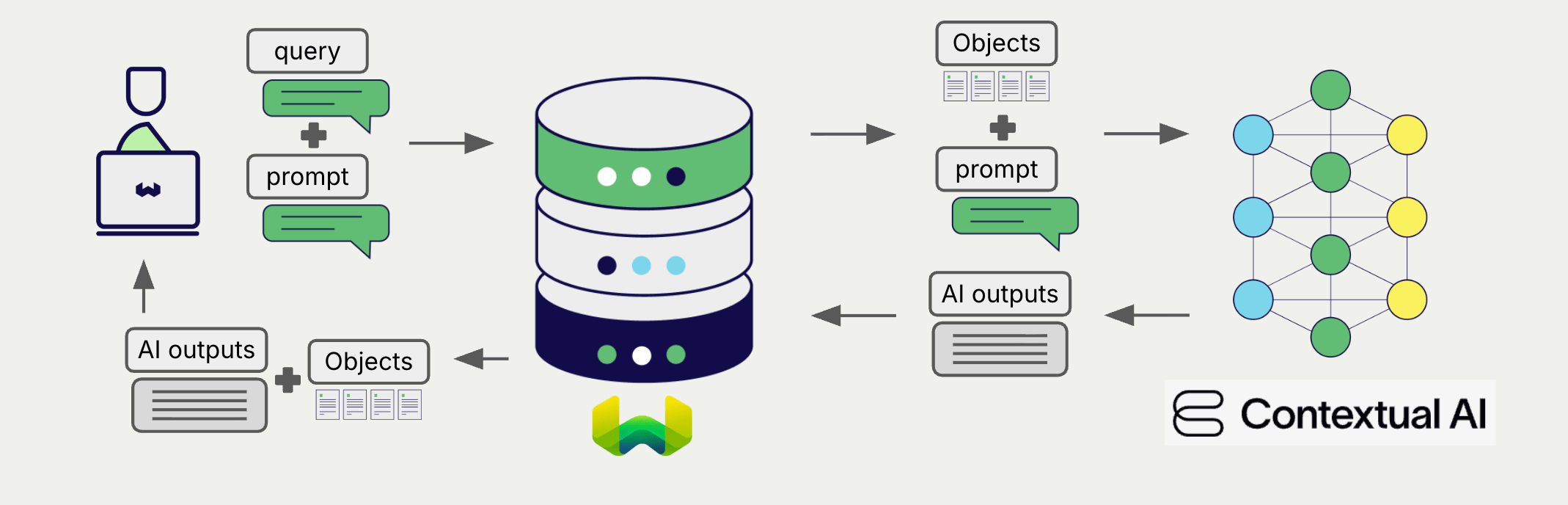

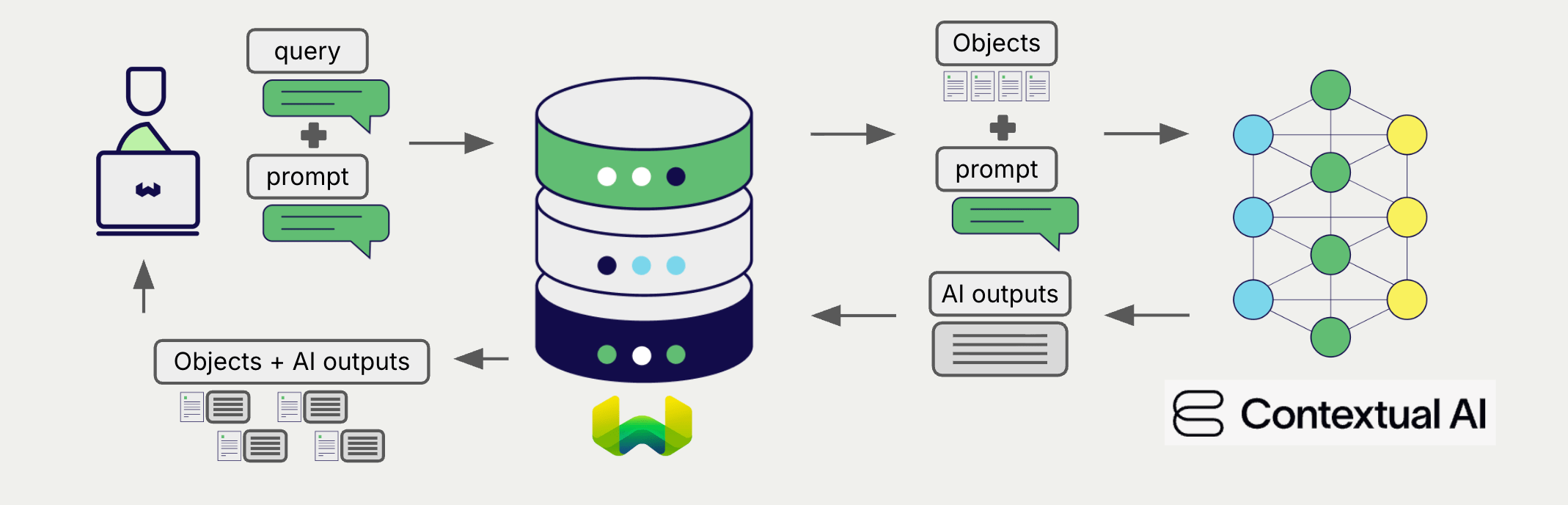

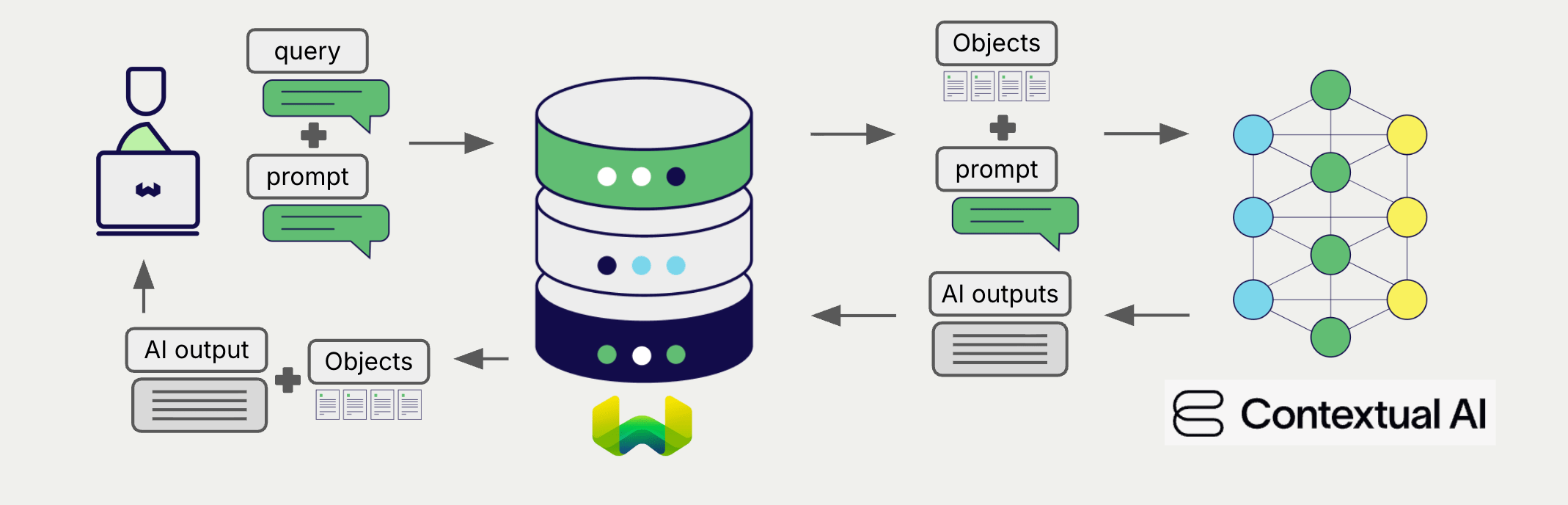

Configure a Weaviate collection to use a generative AI model with Contextual AI. Weaviate will perform retrieval augmented generation (RAG) using the specified model and your Contextual AI API key.

More specifically, Weaviate will perform a search, retrieve the most relevant objects, and then pass them to the Contextual AI generative model to generate outputs.

Requirements

Weaviate configuration

Your Weaviate instance must be configured with the Contextual AI generative AI integration (generative-contextualai) module.

For Weaviate Cloud (WCD) users

This integration is enabled by default on Weaviate Cloud (WCD) instances.

For self-hosted users

- Check the cluster metadata to verify if the module is enabled.

- Follow the how-to configure modules guide to enable the module in Weaviate.
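For self-hosted instances, the metadata check can be sketched as a lookup of the module name. This is a minimal sketch, assuming the metadata contains a top-level `modules` mapping keyed by module name, as the Python client's `client.get_meta()` typically returns. The helper `is_module_enabled` and the sample dictionary are illustrative and not part of the client.

```python
def is_module_enabled(meta: dict, module_name: str = "generative-contextualai") -> bool:
    """Return True if `module_name` appears in the `modules` section of the metadata."""
    modules = meta.get("modules") or {}
    return module_name in modules


# Hypothetical metadata, shaped like a cluster metadata response.
sample_meta = {
    "version": "1.34.0",
    "modules": {"generative-contextualai": {}, "text2vec-weaviate": {}},
}

print(is_module_enabled(sample_meta))
print(is_module_enabled({"version": "1.34.0", "modules": {}}))
```

With a live connection, you would pass the result of `client.get_meta()` in place of `sample_meta`.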

API credentials

You must provide a valid Contextual AI API key to Weaviate for this integration. Go to Contextual AI to sign up and obtain an API key.

Provide the API key to Weaviate using one of the following methods:

- Set the CONTEXTUAL_API_KEY environment variable that is available to Weaviate.

- Provide the API key at runtime, as shown in the examples below.

If a snippet doesn't work or you have feedback, please open a GitHub issue.

import os

import weaviate
from weaviate.classes.init import Auth

# Placeholder environment variable names for the connection details; adjust to your setup
weaviate_url = os.getenv("WEAVIATE_URL")
weaviate_key = os.getenv("WEAVIATE_API_KEY")

# Recommended: save sensitive data as environment variables
contextual_key = os.getenv("CONTEXTUAL_API_KEY")
headers = {
    "X-ContextualAI-Api-Key": contextual_key,
}

client = weaviate.connect_to_weaviate_cloud(
    cluster_url=weaviate_url,  # `weaviate_url`: your Weaviate URL
    auth_credentials=Auth.api_key(weaviate_key),  # `weaviate_key`: your Weaviate API key
    headers=headers,
)

# Work with Weaviate

client.close()
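`os.getenv` returns `None` when a variable is unset, so a missing key would only surface later, at request time. The following is a minimal sketch of failing early instead. `build_contextual_headers` is a hypothetical helper, not part of the Weaviate client; only the header name and the environment variable name come from this page.

```python
import os
from typing import Mapping


def build_contextual_headers(env: Mapping[str, str] = os.environ) -> dict:
    """Build the runtime header dict, raising early if the key is missing."""
    key = env.get("CONTEXTUAL_API_KEY")
    if not key:
        raise RuntimeError("CONTEXTUAL_API_KEY is not set")
    return {"X-ContextualAI-Api-Key": key}


# Example with an explicit mapping instead of the real environment.
headers = build_contextual_headers({"CONTEXTUAL_API_KEY": "example-key"})
print(headers)
```

The returned dictionary can be passed as the `headers` argument when connecting.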

Configure collection

A collection's generative model integration configuration is mutable from v1.25.23, v1.26.8 and v1.27.1. See this section for details on how to update the collection configuration.

Configure a Weaviate index as follows to use a Contextual AI generative AI model:

from weaviate.classes.config import Configure

client.collections.create(
    "DemoCollection",
    generative_config=Configure.Generative.contextualai(),
    # Additional parameters not shown
)

Select a model

You can specify one of the available models for Weaviate to use, as shown in the following configuration example:

from weaviate.classes.config import Configure

client.collections.create(
    "DemoCollection",
    generative_config=Configure.Generative.contextualai(
        model="v2"
    ),
    # Additional parameters not shown
)

If no model is specified, Weaviate uses the default model.

Generative parameters

Configure the following generative parameters to customize the model behavior.

from weaviate.classes.config import Configure

client.collections.create(
    "DemoCollection",
    generative_config=Configure.Generative.contextualai(
        # These parameters are optional
        # model="v2",
        # temperature=0.7,
        # max_tokens=1024,
        # top_p=0.9,
        # system_prompt="You are a helpful assistant",
        # avoid_commentary=True,
        # knowledge=["Custom knowledge override", "Additional context"],
    ),
    # Additional parameters not shown
)

For further details on model parameters, see the Contextual AI API documentation.

If a parameter is not specified, Weaviate uses the server-side default for that parameter. The defaults are:

- model = "v2"

- temperature = 0.0

- topP = 0.9

- maxNewTokens = 1024

- systemPrompt = ""

- avoidCommentary = false

- knowledge = nil
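The fallback behavior can be pictured as overlaying the values you set on top of the defaults. The sketch below only illustrates that idea and is not Weaviate's actual resolution code. The function name `effective_settings` is hypothetical, and treating `None` as "not specified" is an assumption of this example.

```python
# Server-side defaults as listed above (names as the server reports them).
SERVER_DEFAULTS = {
    "model": "v2",
    "temperature": 0.0,
    "topP": 0.9,
    "maxNewTokens": 1024,
    "systemPrompt": "",
    "avoidCommentary": False,
    "knowledge": None,
}


def effective_settings(overrides: dict) -> dict:
    """Overlay explicitly set values on the defaults; None means 'not specified'."""
    unknown = set(overrides) - set(SERVER_DEFAULTS)
    if unknown:
        raise KeyError(f"Unknown parameter(s): {sorted(unknown)}")
    specified = {k: v for k, v in overrides.items() if v is not None}
    return {**SERVER_DEFAULTS, **specified}


settings = effective_settings({"temperature": 0.7, "maxNewTokens": None})
print(settings["temperature"], settings["maxNewTokens"], settings["model"])
```

Here only `temperature` is overridden, so the remaining values keep their defaults.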

Select a model at runtime

Aside from setting the default model provider when creating the collection, you can also override it at query time.

from weaviate.classes.generate import GenerativeConfig

collection = client.collections.use("DemoCollection")

response = collection.generate.near_text(
    query="A holiday film",
    limit=2,
    grouped_task="Write a tweet promoting these two movies",
    generative_provider=GenerativeConfig.contextualai(
        # These parameters are optional
        # model="v2",
        # temperature=0.7,
        # max_tokens=1024,
        # top_p=0.9,
        # system_prompt="You are a helpful assistant",
        # avoid_commentary=True,
        # knowledge=["Custom knowledge override", "Additional context"],
    ),
    # Additional parameters not shown
)

Header parameters

You can provide the API key as well as some optional parameters at runtime through additional headers in the request. The following headers are available:

- X-ContextualAI-Api-Key: The Contextual AI API key.

Any additional headers provided at runtime will override the existing Weaviate configuration.

Provide the headers as shown in the API credentials examples above.
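The override rule can be modeled as a simple precedence check: a key sent in the request header wins over a key configured through the environment. This is a sketch of the documented precedence only, not Weaviate's server code. `resolve_api_key` is a hypothetical name, and the case-insensitive header lookup reflects general HTTP behavior rather than anything stated on this page.

```python
from typing import Mapping, Optional

HEADER_NAME = "X-ContextualAI-Api-Key"


def resolve_api_key(env_key: Optional[str], request_headers: Mapping[str, str]) -> Optional[str]:
    """Prefer the key from the request header; otherwise fall back to the configured key."""
    # HTTP header names are case-insensitive, so compare in lowercase.
    lowered = {name.lower(): value for name, value in request_headers.items()}
    return lowered.get(HEADER_NAME.lower()) or env_key


print(resolve_api_key("env-key", {"X-ContextualAI-Api-Key": "header-key"}))
print(resolve_api_key("env-key", {}))
```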

Retrieval augmented generation

After configuring the generative AI integration, you can perform RAG operations with either the single prompt method or the grouped task method.

Single prompt

To generate text for each object in the search results, use the single prompt method.

The example below generates outputs for each of the n search results, where n is specified by the limit parameter.

When creating a single prompt query, use braces {} to interpolate the object properties you want Weaviate to pass on to the language model. For example, to pass on the object's title property, include {title} in the query.

collection = client.collections.use("DemoCollection")

response = collection.generate.near_text(
    query="A holiday film",  # The model provider integration will automatically vectorize the query
    single_prompt="Translate this into French: {title}",
    limit=2
)

for obj in response.objects:
    print(obj.properties["title"])
    print(f"Generated output: {obj.generated}")  # Note that the generated output is per object
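To make the brace interpolation concrete, the sketch below shows the idea in plain Python: each `{name}` placeholder is replaced with the matching property of one object, producing one prompt per search result. This is only an illustration and not Weaviate's implementation. `render_single_prompt` and the sample results are hypothetical, and raising an error for a missing property is a choice of this example.

```python
import re


def render_single_prompt(template: str, properties: dict) -> str:
    """Replace each {name} placeholder with the matching object property."""
    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in properties:
            raise KeyError(f"Object has no property named {name!r}")
        return str(properties[name])

    return re.sub(r"\{(\w+)\}", substitute, template)


# Hypothetical search results; with limit=2 there is one prompt per object.
results = [{"title": "Home Alone"}, {"title": "Elf"}]
prompts = [render_single_prompt("Translate this into French: {title}", r) for r in results]
print(prompts)
```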

Grouped task

To generate one text for the entire set of search results, use the grouped task method.

In other words, when you have n search results, the generative model generates one output for the entire group.

collection = client.collections.use("DemoCollection")

response = collection.generate.near_text(
    query="A holiday film",  # The model provider integration will automatically vectorize the query
    grouped_task="Write a fun tweet to promote readers to check out these films.",
    limit=2
)

print(f"Generated output: {response.generative.text}")  # Note that the generated output is per query

for obj in response.objects:
    print(obj.properties["title"])
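In contrast to a single prompt, a grouped task sends the model one prompt that covers all n results. The sketch below illustrates that shape in plain Python. The prompt layout (task, then a `Context:` section with one JSON line per object) is invented for this example, since this page does not describe the format Weaviate actually uses. `build_grouped_prompt` is a hypothetical helper.

```python
import json


def build_grouped_prompt(task: str, objects: list) -> str:
    """Combine the task and every retrieved object into a single prompt string."""
    context = "\n".join(json.dumps(obj, sort_keys=True) for obj in objects)
    return f"{task}\n\nContext:\n{context}"


# Hypothetical search results; both objects end up in the same prompt.
results = [{"title": "Home Alone"}, {"title": "Elf"}]
prompt = build_grouped_prompt("Write a fun tweet about these films.", results)
print(prompt)
```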

References

Available models

Currently, the following Contextual AI generative AI models are available for use with Weaviate:

- v1

- v2 (default)

Further resources

Other integrations

Code examples

Once the integrations are configured at the collection, the data management and search operations in Weaviate work identically to any other collection. See the following model-agnostic examples:

- The How-to: Manage collections and How-to: Manage objects guides show how to perform data operations (i.e. create, read, update, delete collections and objects within them).

- The How-to: Query & Search guides show how to perform search operations (i.e. vector, keyword, hybrid) as well as retrieval augmented generation.

References

- Contextual AI Generate API documentation

Questions and feedback

If you have any questions or feedback, let us know in the user forum.